
The Cluster Collaboration


Transforms between Sloan and Cousins for V-I

In some of our work we have used the Sloan i filter in preference to Cousins I, to cut down the night-sky fringing. We have, however, tied these data back to the Landolt standards to produce a V-I colour. There are already papers which compare the Sloan system to the Cousins system where both have been calibrated using their "natural" standards (Fukugita et al., Smith et al.), but nothing which uses a mixed system such as ours. Our concerns were that there is a relatively large colour term in our transformations (~1.2, rather than 1.0), and that there might be a systematic deviation with colour.

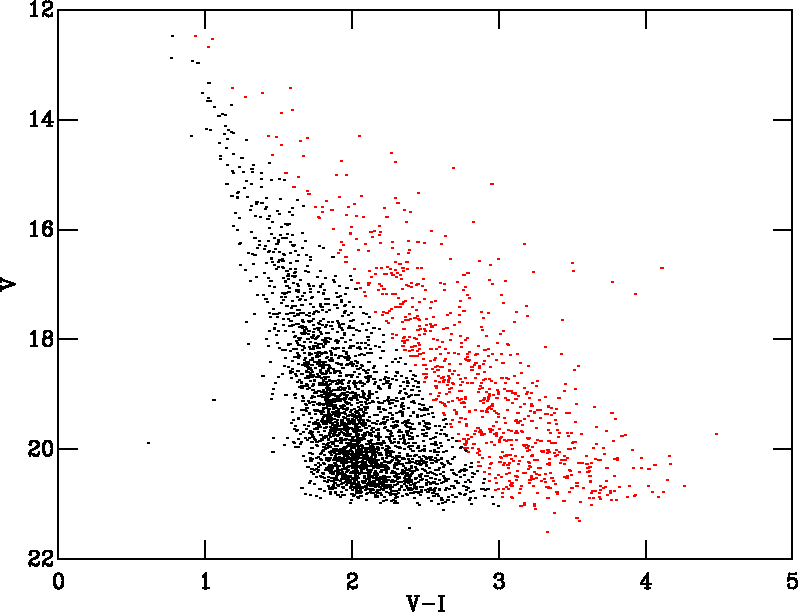

To examine this we have compared the V-I data we obtained for Cep OB3b in Pozzo et al. (2003) with a Cousins I filter to the newer data used in Burningham et al. (2005), which were obtained using a Sloan i filter and which for this work we calibrated to give V-I. The colour-magnitude diagram above shows the stars in common between the two datasets, with a photometrically defined pre-main-sequence in red.

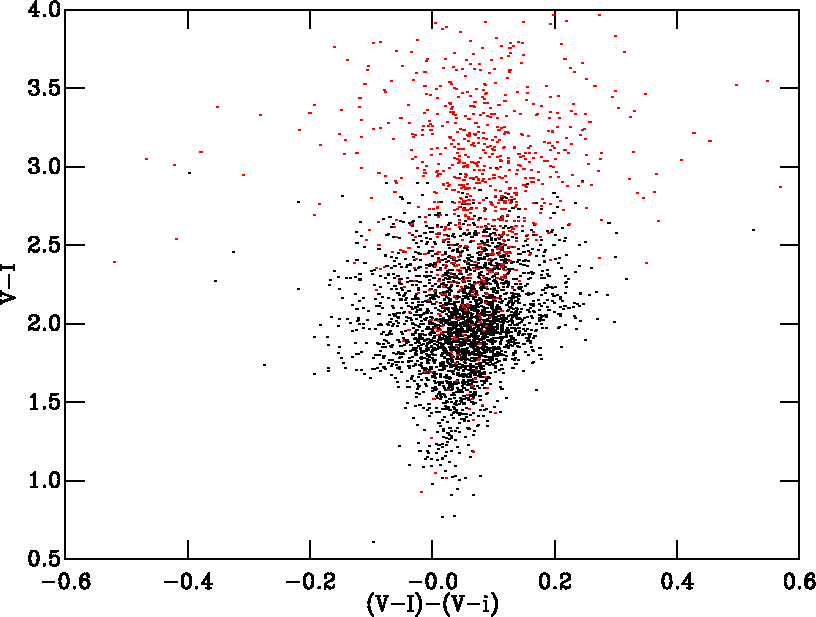

This diagram shows the differences between the two photometric systems, where i should be understood to mean data taken through a Sloan i filter but tied to the Landolt standards.

The data can be represented by the following linear fit.

(V-i) = 0.971(V-I) + 0.008
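
As a quick way to see what the fit implies at a given colour, here is a minimal sketch that applies the relation above and its algebraic inverse. The function names are ours, chosen purely for illustration, and the coefficients are simply those quoted in the fit. Nothing here accounts for the scatter about the line, and the relation should not be trusted beyond the colour range covered by the stars in common.

```python
# Coefficients of the linear fit quoted above: (V-i) = 0.971 (V-I) + 0.008
SLOPE = 0.971
OFFSET = 0.008


def cousins_to_mixed(v_minus_I):
    """Predict the mixed-system colour V-i from a Cousins V-I colour."""
    return SLOPE * v_minus_I + OFFSET


def mixed_to_cousins(v_minus_i):
    """Invert the fit: estimate Cousins V-I from a mixed-system V-i colour."""
    return (v_minus_i - OFFSET) / SLOPE


if __name__ == "__main__":
    # Tabulate the predicted colour and the offset between the two systems.
    for colour in (1.0, 2.0, 3.0):
        predicted = cousins_to_mixed(colour)
        print(f"V-I = {colour:.2f}  ->  V-i = {predicted:.3f}  "
              f"(difference {predicted - colour:+.3f})")
```

At V-I = 3 the fit gives V-i of about 2.92, so the two colours differ by a little under 0.1 mag at the red end and by much less for bluer stars.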

The pre-main-sequence stars (in red) do not show a significantly different trend from the others. The caveat is that the reddest standard in our V-i calibration is at 2.87, and in V-I at 2.5. The reason for the difference between the two systems is almost certainly the relatively high extinction to the field (approximately 3 mags in V). Our standard star calibration should have ensured that a Landolt standard at V-I=3 (which will be a cool star) comes out at the right colour. But a highly reddened star has a different spectrum, and though it might be V-I=3 with the Cousins filter, it evidently comes out slightly different when measured with the Sloan one.

If you want to try other descriptions of the data, you can download either the entire catalogue or just the PMS selection in our usual cluster format.
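
For anyone trying an alternative description, a minimal sketch of an unweighted straight-line fit is given below. It assumes you have already read the matched V-I and V-i colours into two Python lists. Reading the cluster-format files is not shown, and the points used here are synthetic placeholders generated from the fit quoted above, not real catalogue values. Ordinary least squares is our assumption for illustration; it ignores the photometric uncertainties in both colours and is not necessarily how the quoted fit was derived.

```python
def fit_line(x, y):
    """Unweighted least-squares fit of y = slope * x + intercept."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two equal-length sequences with at least 2 points")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    # Sum of squared deviations in x, and of cross deviations in x and y.
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    if sxx == 0:
        raise ValueError("all x values are identical; slope is undefined")
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Synthetic placeholder colours lying exactly on the quoted fit (NOT real data).
v_minus_I = [0.5, 1.0, 1.5, 2.0, 2.5]
v_minus_i = [0.971 * c + 0.008 for c in v_minus_I]

slope, intercept = fit_line(v_minus_I, v_minus_i)
print(f"(V-i) = {slope:.3f}(V-I) + {intercept:.3f}")
```

Replacing the placeholder lists with the real matched colours, or restricting them to the PMS selection, lets you check whether the two subsets give consistent slopes.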